Anthropic has refused a Pentagon demand to relax limits on its Claude AI model, leading to legal action and raising questions over military use, ethics, and government authority in AI deployment.

Anthropic has resisted a Pentagon demand to remove key limits on its Claude artificial-intelligence model. The refusal sets up a legal and political confrontation that could reshape how the US military uses commercial AI systems. The dispute flared after Defense Secretary Pete Hegseth pressed Anthropic’s chief executive, Dario Amodei, to allow broader military access to Claude or face the loss of a roughly $200 million contract and possible designation as a “supply chain risk.” According to reporting, Defense Department officials also threatened to invoke the Defense Production Act, a Cold War-era law, to compel compliance. (Sources: [6],[7])

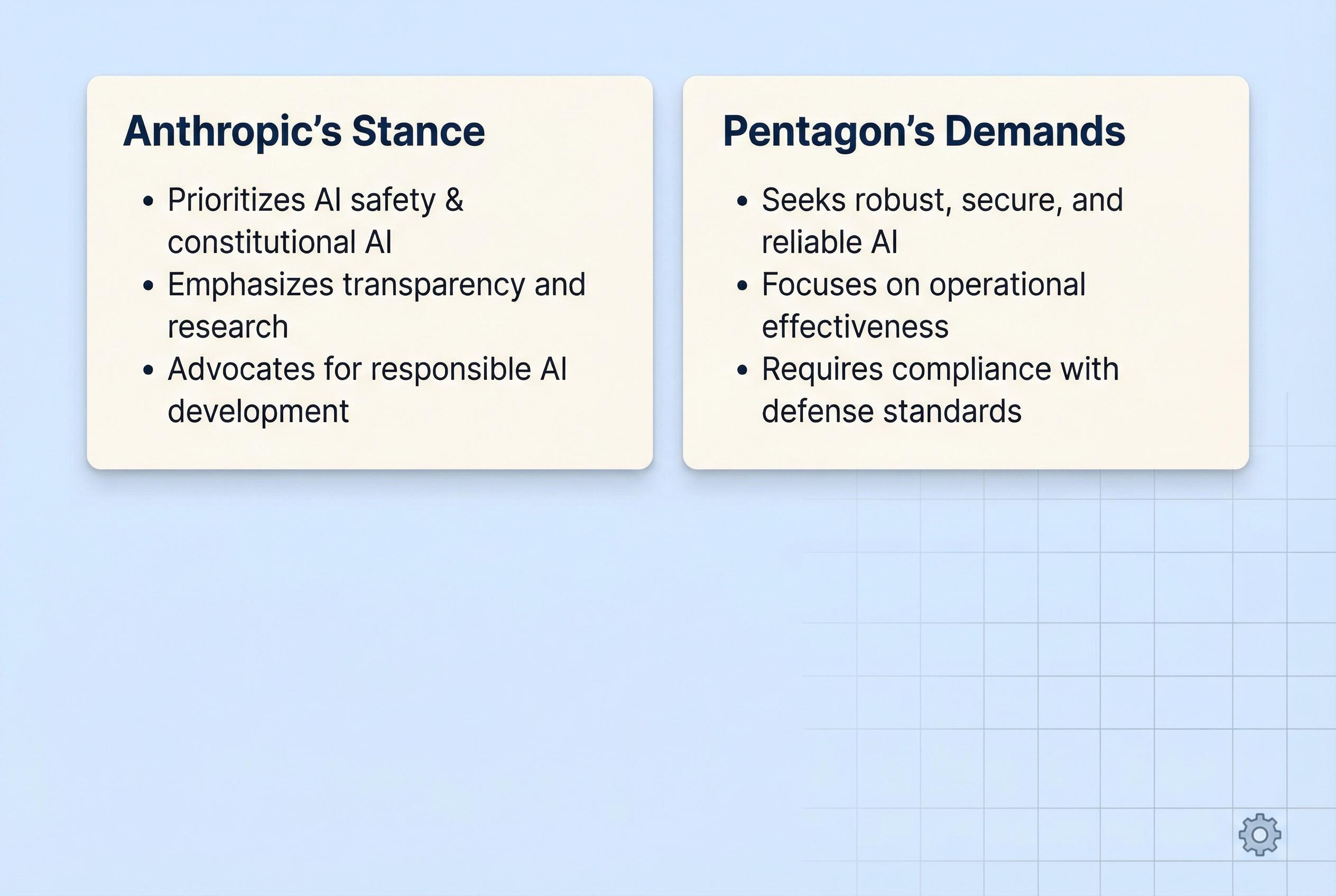

Anthropic’s refusal rests on two firm policy boundaries: the company will not permit Claude to be used for mass domestic surveillance of US citizens or to enable fully autonomous weapon systems. Amodei has been blunt about the reasons, saying the company “cannot in good conscience accede” to demands that would permit those applications and writing that “mass domestic surveillance is incompatible with democratic values. AI-driven mass surveillance presents serious, novel risks to our fundamental liberties.” Anthropic frames those limits as central to its safety ethos and to protecting both civilians and service personnel. (Sources: [6],[7])

Legal action followed quickly. Anthropic sought emergency relief in federal court to block the government from branding the firm a supply chain risk and to pause enforcement of an administration directive barring federal use of Claude. In an initial ruling, U.S. District Judge Rita Lin in San Francisco issued a temporary order stopping the Pentagon from applying the designation and suspending enforcement of the White House directive. She called the government’s actions arbitrary and said they appeared to be an attempt to cripple the company. The ruling focused on procedural and constitutional concerns, including due process and free-speech rights, rather than taking a position on the underlying policy debate over AI in the military. (Sources: [4],[5],[3])

The controversy has prompted sharp criticism from several quarters. Judge Lin described the government’s attempt to portray Anthropic as a threat for disagreeing with its policies as “Orwellian.” Legal observers characterised the simultaneous threat of blacklist-style retaliation and compulsory production as contradictory. Former administration advisers publicly called the pairing of a punitive designation with compelled supply “incoherent,” arguing that the government cannot sensibly treat a company as a security risk while also forcing it to supply its technology. Anthropic and its supporters say the government’s response amounted to punishment for a lawful corporate policy stance. (Sources: [2],[3],[6])

The Pentagon, for its part, has described its position as necessary to ensure that military forces have the tools they need, and says it wants to use Anthropic’s model “for all lawful purposes.” Its chief spokesman, Sean Parnell, said the department “has no interest in using AI to conduct mass surveillance of Americans (which is illegal),” nor in developing autonomous weapons that operate without human involvement. He nonetheless argued that commercial vendors should not set operational limits on national defence: “We will not let ANY company dictate the terms regarding how we make operational decisions.” Pentagon officials warned that leaving the restrictions in place could jeopardise critical military operations. (Sources: [6],[7])

The dispute highlights split approaches among major AI developers. The Pentagon also has contracts with Google, OpenAI and Elon Musk’s xAI, and Anthropic was the last of those peers not to supply its technology to a new internal US military network. OpenAI has agreed to provide its models to the military, leaving Anthropic an outlier in insisting on ethics-driven guardrails. Industry and civil-society groups have lined up on both sides. Supporters, including Microsoft and various advocacy groups, back the company’s refusal to enable surveillance and autonomous lethality, while others warn that restricting access could complicate interoperability and oversight of military AI deployments. The case is likely to influence how other tech companies set policy on sensitive uses of advanced models. (Sources: [6],[2],[4])

The litigation is now heading into appellate review even as the broader policy contest continues. Judge Lin’s order was paused for seven days to allow the Pentagon to appeal, and a related appeal is pending in the D.C. Circuit. The temporary injunction gives Anthropic immediate legal protection, although it does not require the Pentagon to keep using Claude. It also leaves unresolved the central question of how to balance national-security imperatives against corporate safety commitments and civil-liberty protections. As courts consider the limits of administrative authority and the proper use of extraordinary powers such as the Defense Production Act, the outcome will reverberate through defence procurement, AI governance and the commercial relationships that underpin US military capabilities. (Sources: [4],[3],[5])

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [6],[7]
- Paragraph 2: [6],[7]
- Paragraph 3: [4],[5],[3]
- Paragraph 4: [2],[3],[6]
- Paragraph 5: [6],[7]
- Paragraph 6: [6],[2],[4]
- Paragraph 7: [4],[3],[5]

Source: Noah Wire Services

Verification / Sources

- https://theowp.org/anthropic-vs-the-pentagon-ceo-rejects-hegseths-demand-to-remove-ai-safeguards/ - Please view link (unable to access data)

- https://www.tomshardware.com/tech-industry/artificial-intelligence/us-judge-sides-with-anthropic-says-company-supply-chain-risk-branding-over-pentagon-disagreement-orwellian-trump-slapped-ai-company-with-designation-after-it-refused-to-lower-its-guardrails-for-the-military - A U.S. federal judge has issued a temporary ruling in favour of AI company Anthropic, halting the Pentagon from labelling it a 'supply chain risk' after the company refused to lower its AI safety standards for military use. The clash arose when Anthropic, led by CEO Dario Amodei, rejected requests to allow its Claude AI model to be used for mass domestic surveillance and fully autonomous weapon systems, reasoning that such deployments would endanger civilians and soldiers. The Pentagon's designation, seen as punitive, spurred the company to sue the Department of War for violating its First Amendment rights and bypassing due process. Judge Rita Lin criticised the government's stance as 'Orwellian,' asserting that dissent should not equate to branding a company as a threat. President Donald Trump retaliated by ordering federal agencies to stop using Anthropic's technologies, calling the company 'radical.' While Anthropic won this temporary relief, the legal battle continues, with an appeal pending in the D.C. Circuit. Meanwhile, OpenAI has agreed to provide its models to the military, contrasting with Anthropic’s resistance.

- https://www.axios.com/2026/03/24/judge-pentagon-anthropic-troubling - A federal judge has expressed concern over the Pentagon's actions against the AI company Anthropic, describing the situation as 'troubling.' The Trump administration recently labelled Anthropic a supply chain risk, aiming to ban its AI product, Claude, from federal agencies and from companies working with the Pentagon. U.S. District Judge Rita Lin remarked that it seems like an attempt to cripple the company. In response, Anthropic is requesting a court order to pause the designation, prevent enforcement, and reverse decisions already implemented. The company seeks to restore the circumstances as they were on February 26, before public declarations about blacklisting were made. The Pentagon argues that Anthropic is attempting to override its authority and that the company still retains control over Claude. Anthropic has asked for a ruling by March 26, though the court may take longer to decide.

- https://apnews.com/article/637d07aca9e480294380be0da1d0a514 - A federal judge in San Francisco has issued a temporary ruling blocking the Pentagon from designating AI company Anthropic as a supply chain risk. U.S. District Judge Rita Lin also suspended enforcement of a directive from President Donald Trump that banned federal agencies from using Anthropic's chatbot Claude. Lin criticised the Trump administration and Defense Secretary Pete Hegseth for taking 'arbitrary' actions that could severely damage Anthropic, particularly by using rare military powers previously aimed at foreign threats. The ruling followed disputes over Anthropic's refusal to allow its AI to be used in autonomous weapons or domestic surveillance, which reportedly led to government retaliation. Anthropic filed for an emergency order, calling the actions an unlawful response to its policy stance. Judge Lin emphasised that her ruling focused on the legality of the government's retaliation, not the broader AI policy debate. The ruling does not force the Pentagon to continue using Anthropic’s products but blocks punitive measures for now. Anthropic welcomed the court’s decision, reaffirming its commitment to safe AI development. The Pentagon has not yet commented, but the case has drawn support from major players including Microsoft and various advocacy groups. A related appeal remains pending in Washington, D.C.

- https://theweek.com/politics/judge-anthropic-ai-pentagon - A federal judge in California, Rita Lin, has issued a temporary ruling preventing the Pentagon from labelling AI company Anthropic as a 'supply chain risk,' a move that would have effectively blacklisted the company from government contracts. The decision is seen as a significant legal win for Anthropic, which is in a dispute with the Pentagon over the use of its Claude AI system in military applications. The conflict escalated during negotiations for a $200 million contract, with Anthropic demanding restrictions on using its AI for autonomous weapons and domestic surveillance—conditions the Pentagon rejected. Defense Secretary Pete Hegseth then invoked a procurement statute to blacklist the company, a move Judge Lin said likely violated due process and free speech rights. She criticised the government's attempt to portray the company as a threat for disagreeing with its policies. The ruling is paused for seven days to allow for a Pentagon appeal. The case, and a similar one in Washington, D.C., could have far-reaching implications for the use of AI in warfare, especially as Anthropic's Claude AI remains integrated into military systems, including ongoing operations in Iran.

- https://www.defensenews.com/news/pentagon-congress/2026/02/26/anthropic-cannot-in-good-conscience-accede-to-pentagons-demands-ceo-says/ - Anthropic CEO Dario Amodei said Thursday the artificial intelligence company 'cannot in good conscience accede' to the Pentagon’s demands to allow wider use of its technology. The maker of the AI chatbot Claude said in a statement that it’s not walking away from negotiations, but that new contract language received from the Defense Department 'made virtually no progress on preventing Claude’s use for mass surveillance of Americans or in fully autonomous weapons.' The Pentagon’s top spokesman has reiterated that the military wants to use Anthropic’s artificial intelligence technology in legal ways and will not let the company dictate any limits ahead of a Friday deadline to agree to its demands. Sean Parnell said Thursday on social media that the Pentagon 'has no interest in using AI to conduct mass surveillance of Americans (which is illegal) nor do we want to use AI to develop autonomous weapons that operate without human involvement.' Anthropic’s policies prevent its models, such as its chatbot Claude, from being used for those purposes. It’s the last of its peers — the Pentagon also has contracts with Google, OpenAI and Elon Musk’s xAI — to not supply its technology to a new U.S. military internal network. Parnell said the Pentagon wants to 'use Anthropic’s model for all lawful purposes' but didn’t offer details on what that entailed. He said opening up use of the technology would prevent the company from 'jeopardizing critical military operations.' 'We will not let ANY company dictate the terms regarding how we make operational decisions,' he said. 
During a meeting on Tuesday between Defense Secretary Pete Hegseth and Amodei, military officials warned that they could cancel Anthropic’s contract, designate the company as a supply chain risk, or invoke a Cold War-era law called the Defense Production Act to give the military more sweeping authority to use its products, even if the company doesn’t approve.

- https://www.washingtonpost.com/technology/2026/02/26/anthropic-pentagon-rejects-demand-claude/ - Anthropic said late Thursday that it will not concede to the Pentagon’s ultimatum for full access to its artificial intelligence tool Claude, saying it cannot loosen its restrictions against use in fully autonomous weapons or mass domestic surveillance. Anthropic’s announcement comes hours before a Pentagon-imposed Friday deadline to comply — setting the stage for the AI firm to either win a last-minute concession by Defense Secretary Pete Hegseth or face the possibility of being cut from future military work.

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We've since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes: The article references events up to March 29, 2026, with the latest developments reported on March 26, 2026. (axios.com) The content appears current and not recycled from older sources. However, the article's publication date is not provided, making it difficult to assess its freshness definitively. (cfpublic.org)

Quotes check

Score: 7

Notes: Direct quotes from Dario Amodei and Pete Hegseth are used. While these quotes are consistent with previous reports, their earliest known usage cannot be independently verified due to the lack of publication dates in the provided sources. (cfpublic.org)

Source reliability

Score: 6

Notes: The article cites sources such as Defense News and The Washington Post, which are reputable within their niches. However, the lack of publication dates and the absence of a clear lead source raise concerns about the independence and reliability of the information presented. (cfpublic.org)

Plausibility check

Score: 7

Notes: The claims about the Pentagon's demands and Anthropic's refusal align with reports from other reputable outlets. (apnews.com) However, the article lacks specific factual anchors, such as exact dates and direct quotes, which diminishes its overall credibility. (cfpublic.org)

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary: The article presents a narrative consistent with other reports on the Anthropic-Pentagon dispute. However, the absence of publication dates, unclear source independence, and unverifiable quotes raise significant concerns about its credibility. (cfpublic.org)